Featured Article

Software technology from Keyno that generates a dynamic CVV2 number reduced card-not-present (CNP) fraud to 0.57 basis points (bps) on $193 million in purchase volume for the 15 card issuers that participated in a Visa-sponsored test concluded in November 2025. This was a 97% reduction from the fraud rate tied to cards that use only a static CVV2 number: 17.06 bps, equivalent to 17.06 cents per $100 in volume. Participating issuers were from North America, Europe, Latin America and the Caribbean, the Middle East and Africa.
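
The arithmetic behind these figures can be checked with a short sketch. The rates and the $193 million volume come from the test results above. The dollar amounts are only illustrative: they assume, hypothetically, that the static-CVV2 rate would have applied to the same purchase volume, which the test results do not state. The function and variable names are made up for this example.

```python
# Illustrative check of the fraud-rate figures quoted in the article.
# Assumption (not from the source): the static-CVV2 rate is applied to the
# same $193 million volume purely to show what the two rates mean in dollars.

def bps_to_fraction(bps: float) -> float:
    """Convert basis points to a plain fraction (1 bp = 0.01% = 1/10,000)."""
    return bps / 10_000


def fraud_dollars(volume: float, bps: float) -> float:
    """Fraud losses implied by a fraud rate in bps on a given purchase volume."""
    return volume * bps_to_fraction(bps)


DYNAMIC_BPS = 0.57         # fraud rate with dynamic CVV2 (from the article)
STATIC_BPS = 17.06         # fraud rate with static CVV2 only (from the article)
TEST_VOLUME = 193_000_000  # purchase volume in the test, in dollars

# Relative reduction in the fraud rate, as a percentage.
reduction_pct = (1 - DYNAMIC_BPS / STATIC_BPS) * 100

# Implied losses on the test volume at each rate (hypothetical for the static case).
dynamic_loss = fraud_dollars(TEST_VOLUME, DYNAMIC_BPS)
static_loss = fraud_dollars(TEST_VOLUME, STATIC_BPS)

print(f"Reduction in fraud rate: {reduction_pct:.1f}%")
print(f"Implied fraud at dynamic rate: ${dynamic_loss:,.0f}")
print(f"Implied fraud at static rate:  ${static_loss:,.0f}")
```

Under these assumptions the reduction rounds to the 97% reported, and the gap between the two rates amounts to roughly $318,000 on the test volume.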

Keyno’s software does more than fight fraud. Issuers can also use it to place parameters on card spending, validate 3DS transactions, autofill fields at checkout, activate an app, confirm that cardholders are in possession of their card, enable click to pay and manage the life cycle of a merchant or device token.

Most recently, Keyno worked with Visa in the Bahamas for its client Fidelity Bank. The bank deployed two of the seven available use cases for Keyno technology: Tap to Confirm and Tap to Activate.

In Tap to Confirm, cardholders tap their Visa cards on their own mobile phones and use Fidelity’s banking app and Keyno technology to verify their identity before enrolling in dynamic CVV2 and 3D Secure. The bank uses 3D Secure to identify cardholders who initiate high-value transactions.

The bank will use Keyno’s Tap to Activate to let cardholders instantly activate new Visa cards by tapping those cards on mobile phones with Fidelity’s banking app. This eliminates the need for phone calls to customer service centers or for activation codes.

Keyno provides an all-in-one SDK that issuers can use to create the seven use cases within their mobile banking apps. The SDK supports generating a digital card display with a full primary account number or no number at all. Issuers pay Keyno on a per-transaction basis.

FIS, the largest third-party provider of card account processing services worldwide, has become a Keyno partner. It will be live with the tap-based card authentication service later this year in the US.

Card issuers can also purchase Keyno turnkey services through IBM Cloud for Financial Services.

INTERVIEWED FOR THIS ARTICLE: Robert Steinman is Chief Executive Officer at Keyno in Laguna Beach, California, robert.steinman@keyno.io, www.keyno.io.

Charts, Tables and Graphs in this Issue

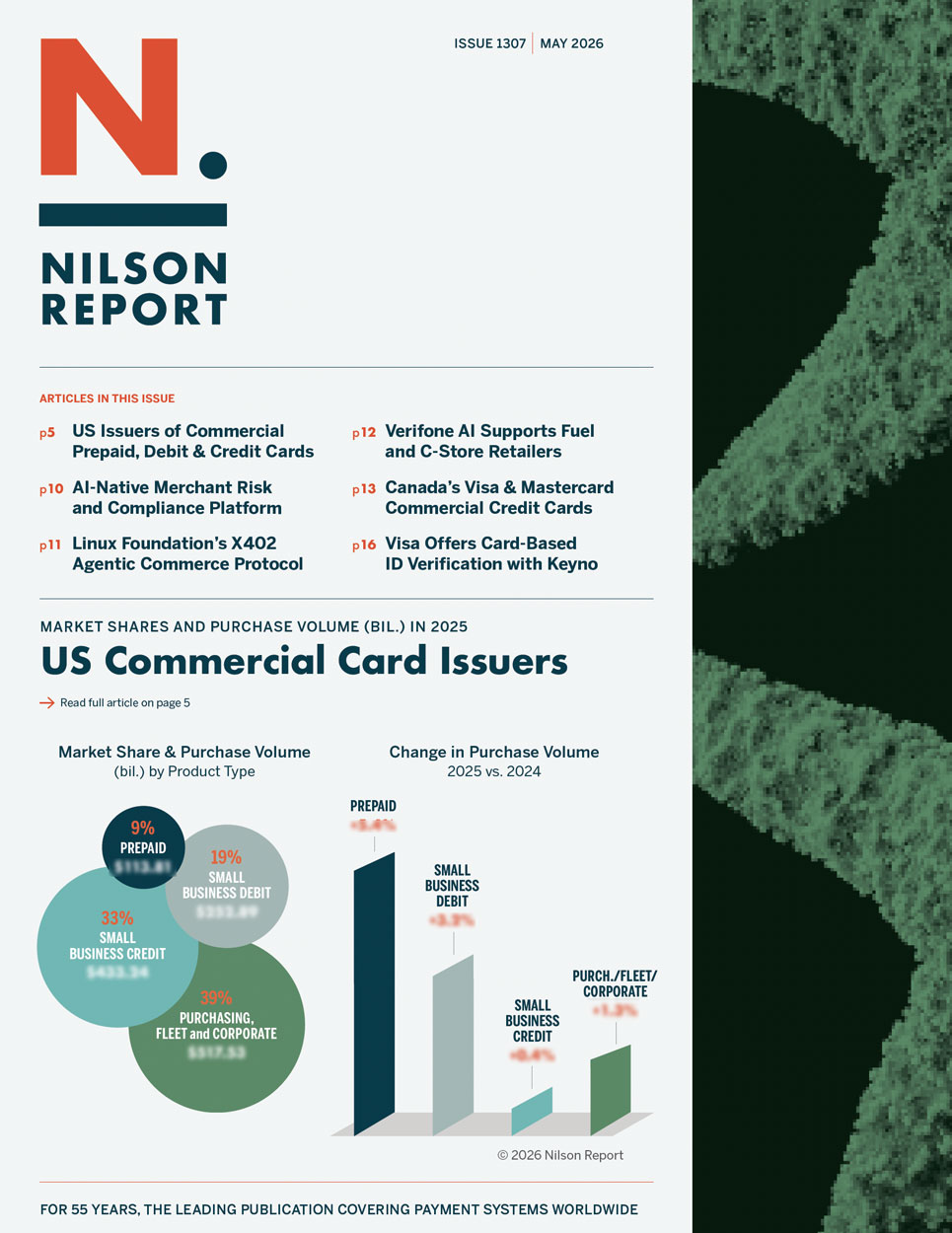

US Commercial Card Issuers 2025—Market Share & Purchase Volume by Product Type

US Commercial Card Issuers—Change in Purchase Volume 2025 vs. 2024

Top Commercial Card Issuers—2025

Best 5-Year Growth by Product—US Commercial Card Issuers 2025

Corporate Cards—2025

Commercial Prepaid Cards—2025

Purchasing & Fleet Cards—2025

Small Business Credit Cards—2025

Small Business Debit Cards—2025

US Visa and Mastercard Commercial Card Issuers Ranked by Purchase Volume in 2025

All Commercial Credit Cards—Canada 2025

Corporate Cards—Canada 2025

Purchasing and Fleet Cards—Canada 2025

Small Business Credit Cards—Canada 2025

Investments & Acquisitions—April 2026